Going serverless with Azure Functions

Note: This article has been edited to add and update content regarding Durable Functions.

Digital transformation has made waves in many industries. Models like Infrastructure as a Service (IaaS) and Software as a Service (SaaS) make digital tools much more accessible and flexible, because you rent the services you need instead of taking on the large commitment of owning them. Functions as a Service (FaaS) applies the same model to compute: if we think of digital infrastructure as storage boxes, FaaS lets us rent a box only while our code is actually using it. Rather than owning and managing everything through a private cloud, organizations can go serverless with one of many cloud service providers, such as Microsoft Azure, Amazon Web Services (AWS), and Google Cloud. This lets us develop and launch applications without building and maintaining our own infrastructure, which can be costly and time-consuming.

Serverless computing took off when Amazon Web Services (AWS) launched Lambda in 2014, and competitors like Google and Microsoft quickly caught up (Thomas Koenig from Netcentric hosted a talk on AWS serverless functions). The technology has since improved rapidly, with industry leaders continually adding new capabilities. Today, many of our enterprise clients use Azure, and Azure Functions now offers functionality that rivals top competitors like AWS Lambda. So let’s dive into Azure Functions as a case study of how serverless computing can give your business efficient and cost-effective solutions.

An overview of Azure Functions

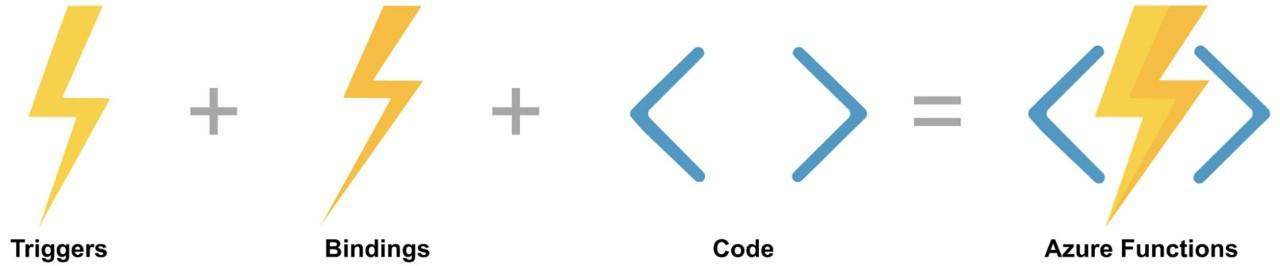

Azure Functions is an event-based, serverless computing platform that accelerates app development, offering a more streamlined way to deploy code and logic in the cloud. These are the main components of an Azure function:

1. Events (Triggers)

A function runs only when an event triggers it; Azure simply calls these triggers, and each function has exactly one. There are many types. The most common is the HTTP trigger, which invokes a function when it receives an HTTP request, for example a webhook call. There are also Blob storage triggers, which fire when a file (blob) is added to or updated in a storage container, and timer triggers, which run a function on a configured schedule.
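Timer trigger schedules are written as NCRONTAB expressions with six fields: `{second} {minute} {hour} {day} {month} {day-of-week}`. To show how such a schedule is read, here is a minimal matcher of our own. It is a sketch, not Azure's parser: it supports only `*`, `*/n`, and single integers, ignores ranges, lists, and names, and simply requires every field to match.

```python
from datetime import datetime

# Lowest legal value of each field: second, minute, hour, day, month, day-of-week.
FIELD_MINIMUMS = [0, 0, 0, 1, 1, 0]


def field_matches(field: str, value: int, minimum: int) -> bool:
    """Check one field; only '*', '*/n', and a single integer are supported here."""
    if field == "*":
        return True
    if field.startswith("*/"):
        step = int(field[2:])
        return (value - minimum) % step == 0  # steps count from the field's minimum
    return int(field) == value


def matches(expression: str, moment: datetime) -> bool:
    """Return True if `moment` satisfies a six-field NCRONTAB expression."""
    fields = expression.split()
    if len(fields) != 6:
        raise ValueError("expected: {second} {minute} {hour} {day} {month} {day-of-week}")
    # Cron counts weekdays from 0 = Sunday; Python's weekday() uses 0 = Monday.
    cron_weekday = (moment.weekday() + 1) % 7
    values = [moment.second, moment.minute, moment.hour,
              moment.day, moment.month, cron_weekday]
    return all(field_matches(f, v, m)
               for f, v, m in zip(fields, values, FIELD_MINIMUMS))


# "0 0 2 * * *" means: second 0, minute 0, hour 2, every day.
print(matches("0 0 2 * * *", datetime(2024, 5, 1, 2, 0, 0)))  # True
print(matches("0 0 2 * * *", datetime(2024, 5, 1, 3, 0, 0)))  # False
```

A daily batch job would use an expression like `0 0 2 * * *`, while `0 */5 * * * *` would fire every five minutes.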

2. Data (Bindings)

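Then there is data, which is pulled in before a function executes and pushed out after it finishes. In Azure, these connections are called bindings: input bindings supply data to the function, output bindings write its results, and a function can have multiple bindings. Because bindings connect declaratively to other services, such as Blob Storage, queues, or Cosmos DB, they reduce boilerplate and spare you from writing your own data-connector code.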
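To make the idea concrete without depending on the Azure SDK, the sketch below imitates what bindings do. The `with_bindings` decorator and the in-memory `storage` dictionary are hypothetical stand-ins: the decorator loads the input before the function runs and stores its return value afterwards, so the function body contains only business logic. In a real function app, the runtime does this work based on the bindings you declare.

```python
from typing import Callable, Dict

# Hypothetical stand-in for a storage account: "container/blob name" -> content.
storage: Dict[str, str] = {"input/order-42.txt": "3 widgets"}


def with_bindings(input_path: str, output_path: str):
    """Hypothetical decorator imitating one input binding and one output binding."""
    def decorator(func: Callable[[str], str]) -> Callable[[], None]:
        def run() -> None:
            data = storage[input_path]      # input binding: data pulled in before the call
            result = func(data)             # the function itself only transforms data
            storage[output_path] = result   # output binding: result pushed out afterwards
        return run
    return decorator


@with_bindings(input_path="input/order-42.txt", output_path="output/order-42.txt")
def uppercase_order(text: str) -> str:
    return text.upper()


uppercase_order()
print(storage["output/order-42.txt"])  # 3 WIDGETS
```

Depending on the language and programming model, real bindings are declared with attributes, decorators, or a `function.json` file rather than a hand-written wrapper like this one.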

3. Code & Configuration

Finally, there is the code and configuration of the functions themselves. Azure Functions supports C#, JavaScript and TypeScript (Node.js), Python, F#, Java, and PowerShell. For other languages or more nuanced cases, custom handlers let you bring your own runtime.

Setting up Azure Functions for your team

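One thing to keep in mind is that a function app that has been idle may have no running instances. When a new event arrives, Azure first has to allocate and initialize an instance before your function can run; this delay is called a cold start. Microsoft offers different hosting plans that can help mitigate this potential issue: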

1. Consumption Plan: This is essentially a pay-as-you-go plan, and the default serverless plan. You’re only charged for the resources used when your functions are running.

2. Premium Plan: This plan includes the same auto-scaling based on demand as in the Consumption Plan, but with the added benefit of using pre-warmed or standby instances. This means functions are already initialized and waiting to be triggered, which helps mitigate the cold start issue for organizations that need real-time responses.

3. App Service Plan: With this plan, your functions run on dedicated virtual machines that stay running (with the Always On setting enabled), so cold starts are not a concern. This is ideal for long-running operations, or when you need more predictable scaling and costs.
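On the Consumption plan, “charged for the resources used” means billing by number of executions plus resource consumption in gigabyte-seconds (GB-s). As we understand Microsoft’s published billing model, memory is rounded up to the nearest 128 MB, execution time is rounded up to the nearest millisecond, each execution is billed for at least 100 ms and 128 MB, and a monthly free grant applies. The sketch below turns that into arithmetic. Prices and free-grant sizes are deliberately left as parameters to fill in from the current Azure pricing page, and using one memory figure per execution is our simplification.

```python
import math


def billed_gb_seconds(memory_mb: float, duration_ms: float) -> float:
    """GB-s billed for one execution, applying the rounding rules described above."""
    memory = max(128, math.ceil(memory_mb / 128) * 128)  # round up to 128 MB steps
    duration = max(100, math.ceil(duration_ms))          # round up to 1 ms, min 100 ms
    return (memory / 1024) * (duration / 1000)


def monthly_cost(executions: int, memory_mb: float, duration_ms: float,
                 price_per_gb_s: float, price_per_million_execs: float,
                 free_gb_s: float = 0.0, free_execs: int = 0) -> float:
    """Rough monthly estimate; every price and free-grant value is caller-supplied."""
    gb_s = executions * billed_gb_seconds(memory_mb, duration_ms)
    billable_gb_s = max(0.0, gb_s - free_gb_s)
    billable_execs = max(0, executions - free_execs)
    return (billable_gb_s * price_per_gb_s
            + billable_execs / 1_000_000 * price_per_million_execs)
```

Because the free grant is subtracted before any price applies, a low-volume workload such as a once-a-day batch job can stay very cheap.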

How to use functions effectively

First and foremost, there are a few rules of thumb to follow when writing functions:

- Functions should do one thing.

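- Functions should be idempotent: running them more than once with the same input must have the same effect as running them once, which makes retries and parallel scale-out safe.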

- Functions should finish as quickly as possible (Note: on Azure’s Consumption plan, a function times out after 5 minutes by default, and the limit can be raised to at most 10 minutes).
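Many triggers can deliver the same event more than once, for example after a retry, so idempotency is what keeps duplicates harmless. A minimal sketch of one common approach, assuming each event carries a unique `event_id` (a hypothetical field), writes results with an upsert keyed on a natural ID and remembers which events were already handled. The in-memory sets and dictionaries are placeholders; in production, the ID check and the write would need to be atomic in a shared store.

```python
from typing import Dict, Set

processed_events: Set[str] = set()    # hypothetical store of handled event IDs
search_index: Dict[str, dict] = {}    # hypothetical target, keyed by product SKU


def handle_event(event: dict) -> None:
    """Apply a product update so that duplicate deliveries have no extra effect."""
    if event["event_id"] in processed_events:
        return                        # already handled: ignore the duplicate
    product = event["product"]
    # Upsert by natural key: writing the same product twice yields the same state.
    search_index[product["sku"]] = product
    processed_events.add(event["event_id"])


event = {"event_id": "evt-1", "product": {"sku": "A-100", "price": 9.5}}
handle_event(event)
handle_event(event)                   # redelivery of the same event
print(len(search_index))  # 1
```

The upsert alone is already idempotent; the processed-ID check matters once a handler has side effects that are not, such as sending an email.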

When your setup grows beyond small functions executing single tasks, Azure offers Durable Functions. They let you chain functions, fan out work so it runs in parallel and fan back in to aggregate the results, and implement other patterns.
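The shape of chaining plus fan-out/fan-in can be illustrated with Python’s standard `concurrent.futures`: one step produces work items, one task starts per item, and the results are aggregated once all tasks finish. In Durable Functions, an orchestrator would schedule activity functions instead of threads and the runtime would checkpoint progress; this sketch shows only the pattern, and `list_documents` and `count_words` are hypothetical stand-ins for activities.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List


def list_documents() -> List[str]:
    """Hypothetical first step of the chain: produce the work items."""
    return ["red green", "blue", "green blue yellow"]


def count_words(document: str) -> int:
    """Hypothetical activity that runs once per work item."""
    return len(document.split())


def run_pipeline() -> int:
    documents = list_documents()                         # chaining: step 1 feeds step 2
    with ThreadPoolExecutor(max_workers=4) as pool:
        counts = list(pool.map(count_words, documents))  # fan-out: one task per item
    return sum(counts)                                   # fan-in: aggregate the results


print(run_pipeline())  # 6
```

`pool.map` returns results in input order, which mirrors an orchestrator waiting on all of its parallel tasks before aggregating.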

Durable Functions

Durable Functions is an extension of Azure Functions that uses the Durable Task Framework to provide stateful functions in a serverless environment. In our current projects, we’re using Durable Functions more and more for long-running tasks that need to be managed as stateful workflows that keep track of their process history.

The biggest lesson we’ve learned while programming Durable Functions is that orchestrator function code must be deterministic, because it is replayed every time the orchestration is rehydrated, that is, every time its state is rebuilt from the execution history. Because the framework relies on this Event Sourcing pattern, there are code constraints to respect when working with Durable Functions; for example, an orchestrator should not read the current time, generate random values, or perform I/O directly. Additionally, engineers should purge the Durable Functions instance history, because the framework doesn’t do this automatically. This can be done by 1) implementing an additional Azure Function that purges history via the SDK, 2) purging history via the Durable Functions HTTP API, or 3) doing it manually in the Azure Portal. Otherwise, many long-running Durable Functions will take up significant storage in your account.
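To see why determinism matters, here is a toy model of replay built on plain Python generators. The Durable Functions Python SDK also writes orchestrators as generators that `yield` activity calls, but this sketch uses none of its API; `run_episode`, the activities, and the history format are all hypothetical. Each episode restarts the orchestrator from the beginning, feeds it recorded results, executes at most one new activity, and checkpoints the result. If the orchestrator asked for different calls on replay, as it might if it branched on the wall-clock time, the engine would detect the mismatch.

```python
from typing import Callable, Dict, Generator, List, Optional, Tuple

Call = Tuple[str, str]                 # (activity name, input)
History = List[Tuple[Call, str]]       # recorded calls and their results
Orchestrator = Callable[[], Generator[Call, str, str]]

executions: List[str] = []             # lets us observe real activity executions


def greet(city: str) -> str:
    executions.append("greet")
    return f"Hello {city}"


def shout(text: str) -> str:
    executions.append("shout")
    return text.upper()


ACTIVITIES: Dict[str, Callable[[str], str]] = {"greet": greet, "shout": shout}


def orchestrator() -> Generator[Call, str, str]:
    """Deterministic: given the same recorded results, it always yields the same calls."""
    greeting = yield ("greet", "Tokyo")
    loud = yield ("shout", greeting)
    return loud


def run_episode(orch: Orchestrator, history: History) -> Optional[str]:
    """Replay from the start, run at most one new activity, then suspend."""
    gen = orch()
    try:
        call = next(gen)
        for recorded_call, recorded_result in history:
            if recorded_call != call:  # replay took a different path than the history
                raise RuntimeError(f"non-deterministic orchestrator: {call} != {recorded_call}")
            call = gen.send(recorded_result)  # reuse the result instead of re-running
        name, arg = call
        history.append((call, ACTIVITIES[name](arg)))  # execute once and checkpoint
        return None                    # suspended; the next episode replays from scratch
    except StopIteration as done:
        return done.value              # the orchestrator finished


history: History = []
result = None
while result is None:
    result = run_episode(orchestrator, history)
print(result)      # HELLO TOKYO
print(executions)  # ['greet', 'shout']
```

Although the orchestrator body ran three times, each activity executed exactly once; the history, not a re-execution, supplied the earlier results.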

What we’ve discovered is that besides using Azure Storage Explorer to monitor Durable Functions, it’s also very handy to install the Durable Functions Monitor extension that lets you list, monitor, and debug your Durable Functions in VS Code. With its bird’s eye view and intuitive interface, we’ve been able to speed up our development and understand the mechanics behind Durable Functions.

Example: Batch importing product data using Azure Functions

In a recent search project, we needed to import product data from the Product Information Management (PIM) system into the Elasticsearch search engine. Since the batch import would run daily, we used Azure Functions on the Consumption plan so that we pay only for execution time during the import. Cold starts wouldn’t be critical, since we didn’t require fast initial responses during the batch import.

- Every day, the PIM exports, compresses, and uploads product data into Azure Blob Storage.

- The process is scheduled with a timer trigger: a timer-triggered function starts the Durable Functions orchestration function at the configured time.

- The Orchestration Function calls an activity function that lists all the required zips under a certain path, then calls the Unzip Function once for each zip name. The Durable Functions runtime executes these Unzip Function instances in parallel (fan-out).

- The Unzip Function reads each zip from the product-import container in Azure Blob Storage, extracts it to a JSON file of roughly 1 GB, and uploads that file to a second container, product-process.

- The Import Function is bound to the product-process container with a Blob trigger, so it runs whenever a new product JSON file is uploaded. It parses the product JSON, applies the business logic, and sends the product data to Elasticsearch for indexing.
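The core of the listing, Unzip, and Import steps can be sketched with the standard library alone. This sketch assumes each zip holds JSON files containing a list of product objects with an `id` field, and it uses a hypothetical `products` index name; blob downloads and uploads and the HTTP call to Elasticsearch are left out. The output follows the newline-delimited format of Elasticsearch’s `_bulk` API: an action line, then the document, with a trailing newline.

```python
import io
import json
import zipfile
from typing import Iterable, List


def list_zips(blob_names: Iterable[str], prefix: str) -> List[str]:
    """Listing step: keep only the zip blobs under the given path prefix."""
    return sorted(n for n in blob_names if n.startswith(prefix) and n.endswith(".zip"))


def unzip_products(zip_bytes: bytes) -> List[dict]:
    """Unzip step: extract the JSON member(s) and parse the product lists."""
    products: List[dict] = []
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as archive:
        for name in archive.namelist():
            if name.endswith(".json"):
                with archive.open(name) as member:  # reads the member as a stream
                    products.extend(json.load(member))
    return products


def to_bulk_body(products: Iterable[dict], index: str = "products") -> str:
    """Import step: build an NDJSON body for Elasticsearch's _bulk endpoint."""
    lines = []
    for product in products:
        lines.append(json.dumps({"index": {"_index": index, "_id": product["id"]}}))
        lines.append(json.dumps(product))
    return "\n".join(lines) + "\n"  # the bulk API expects a final newline


# Build a tiny zip in memory to exercise the steps.
buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w") as archive:
    archive.writestr("products.json", json.dumps([{"id": "A-100", "name": "Lamp"}]))
print(to_bulk_body(unzip_products(buffer.getvalue())))
```

Note that `json.load` holds the whole parsed file in memory. For files around 1 GB, a streaming parser (such as the third-party `ijson` package) and splitting the bulk body into smaller batches would likely be safer choices.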

This is one example of how we can set up a powerful, streamlined solution with Azure Functions. It’s fast to implement, saves an organization time and effort, and costs only a few euros a month to run on the Consumption plan.

Azure Functions is continually evolving, and its runtime is open source, making it easy to stay up to date on the latest features and to exchange best practices and examples with the developer community. In our experience, many enterprise clients already use Microsoft Azure as their cloud service provider, which makes Azure’s serverless capabilities, fast business-logic implementation, and transparent pay-as-you-go pricing a no-brainer to integrate into their tech stack. We can work with these teams to implement new solutions faster in the cloud with FaaS. Azure Functions is a powerful tool with many configuration options for organizations with different needs, so it’s wise to work with an experienced team to tailor the right solution for you.